Project summary:

The implementation of complex strategies in compact circuits remains a major challenge in the design of autonomous devices. This project addresses the following questions: How are exploration strategies implemented in real biological neuronal circuits and how do such circuits maintain their stability amid high noise levels?

We will focus on exploration/exploitation trade-offs and context-responsive behaviour, using tasks that mimic biological learning principles and neuromodulation. Artificial neural networks with biologically inspired dynamics will be trained and progressively pruned to match the scale of the neural circuits identified in the Drosophila larva, enabling comparative analysis between artificial and biological networks.

Required skills:

This project will involve a significant computational component. We strongly recommend a background in machine learning and coding. Applicants with a background in areas such as computational neuroscience, reinforcement learning, or deep neural networks are encouraged to apply. Candidates from related fields in machine learning, applied mathematics, or physics are also welcome.

Duration:

We are seeking an M2 intern for a minimum duration of 4 months.

PhD possibility:

This internship may lead to a PhD position for motivated candidates.

Contact:

dbc-epi-recrutement@pasteur.fr

References:

[1]: C. Eschbach et al., Nature Neuroscience, 2020

[2]: T. Jovanic et al., Cell, 2016

[3]: J. Mouret, arXiv, 2015

[4]: B.R. Cowley et al., Nature, 2024

[5]: G. Fang et al., IEEE/CVF, 2023

[6]: W. Gerstner et al., Cambridge, 2002

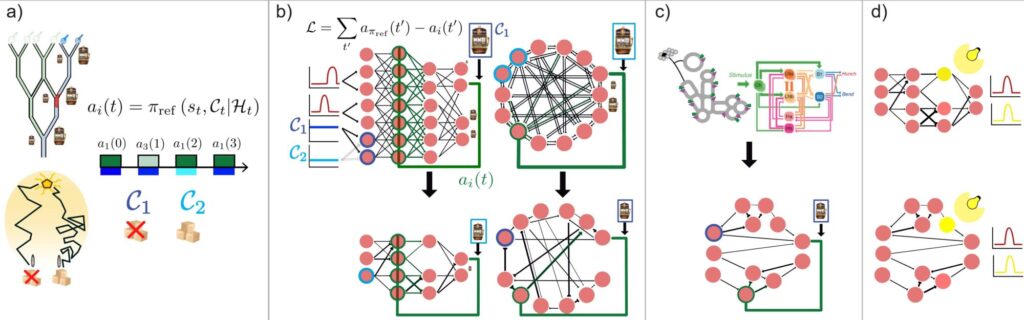

a) Standard larva experiments will be modeled as classic or contextual multi-armed bandits, where each action a_i(t) yields a specific reward that may be noisy and context-dependent. Established artificial decision strategies will serve as benchmarks to be embedded in highly compact circuits.

b) Networks will be initialized from standard artificial network structures, such as fully connected feedforward or recurrent networks. Nodes, interpreted as neurons, will be assigned biologically inspired dynamics and will receive noisy stimuli as well as contextual modulations. A combination of output neuron activities will select the action. The reward will then modulate target neurons in the circuit by modifying synaptic weights [1], by adjusting firing thresholds, or, in the absence of learning, through adaptive leaky integrate-and-fire models [6]. These networks will be progressively pruned to converge towards the statistics observed in the larval circuits. Effectiveness L will be measured against the reference strategy.

c) Likewise, circuits identified as controlling similar tasks [1], [2] will also be considered and trained, but without reducing their size.

d) It will also be possible to reproduce optogenetic experiments to mimic knockouts and to compare the responses of artificially and biologically trained circuits [4].
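As an illustration of the benchmark setting in panel a), the following is a minimal sketch of a noisy multi-armed bandit played with an epsilon-greedy strategy. It is not the project's method: the function name `run_epsilon_greedy`, the Gaussian reward noise, the parameter values and the use of cumulative expected regret as a stand-in for an effectiveness measure are all assumptions made for this example.

```python
import random


def run_epsilon_greedy(true_means, n_steps=2000, epsilon=0.1, noise_sd=1.0, seed=0):
    """Play a noisy multi-armed bandit with an epsilon-greedy strategy.

    Hypothetical helper for illustration only.
    Returns (estimated mean reward per arm, cumulative expected regret).
    """
    rng = random.Random(seed)
    n_arms = len(true_means)
    counts = [0] * n_arms        # number of times each arm was chosen
    estimates = [0.0] * n_arms   # running average of observed rewards
    best_mean = max(true_means)
    regret = 0.0

    for _ in range(n_steps):
        if rng.random() < epsilon:
            # Explore: pick an arm uniformly at random.
            arm = rng.randrange(n_arms)
        else:
            # Exploit: pick the arm with the highest current estimate.
            arm = max(range(n_arms), key=lambda i: estimates[i])

        # Assumed reward model: true mean plus Gaussian noise.
        reward = rng.gauss(true_means[arm], noise_sd)

        # Incremental update of the running average for the chosen arm.
        counts[arm] += 1
        estimates[arm] += (reward - estimates[arm]) / counts[arm]

        # Expected regret: gap between the best arm and the chosen arm.
        regret += best_mean - true_means[arm]

    return estimates, regret


if __name__ == "__main__":
    estimates, regret = run_epsilon_greedy([0.2, 0.5, 0.8])
    print("estimated means:", [round(e, 2) for e in estimates])
    print("cumulative expected regret:", round(regret, 1))
```

In this sketch, a lower cumulative regret means the strategy spent fewer trials on suboptimal actions. A contextual variant would make `true_means` depend on an observed context at each step.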